tar.gz.an each file being around 10 GB in Windows?

I've tried the following commands in my PowerShell (with admin rights):

    cat. tar.gz.aa | tar xzvf -

    cat : Exception of type 'System.OutOfMemoryException' was thrown.
    + CategoryInfo : NotSpecified: (:), OutOfMemoryException
    + FullyQualifiedErrorId : System.OutOfMemoryException,

    zcat : The term 'zcat' is not recognized as the name of a cmdlet, function, script file, or operable program. Check the spelling of the name, or if a path was included, verify that the path is correct and try again.
    + CategoryInfo : ObjectNotFound: (zcat:String), CommandNotFoundException
    + FullyQualifiedErrorId : CommandNotFoundException

Thanks in advance! I would be happy to know of any solutions for Linux as well, in case anyone else is facing the same difficulty.

You are calling cat (an alias for Get-Content) to enumerate the contents of a single file and then attempting to pass the parsed file content to tar. That is why you were getting the OutOfMemoryException. Get-Content is not designed to read binary files; it is designed to read ASCII and Unicode text files, and certainly not 10 GB of them. Even if you had the available memory, I don't know how performantly Get-Content would handle single files that large.

Just pass the file path to tar like this, adding any additional arguments you need, such as controlling the output directory:

    tar xvzf "$.aa"

You can extract all of the archives with a loop in one go (with some helpful output and result checking). This code is also 100% executable in PowerShell Core and should work on Linux:

    Push-Location $pathToFolderWithGzips

( Get-ChildItem -File *.tar.gz.a ).FullName | ForEach-Object takes the FullName values from the preceding expression, pipes them on, and iterates over each fully-qualified path.

Write-Host outputs information to the console via the information stream.
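The loop the answer describes is not reproduced in full here, so what follows is only a minimal sketch of what it might look like, not the answerer's exact code. It assumes each matching file is a complete, independent gzip-compressed tar archive. If the files are instead pieces of one archive produced by a tool such as split, they would have to be joined before extraction. The initial value of `$pathToFolderWithGzips` and the wildcard `*.tar.gz.a?` are illustrative assumptions, and the sketch pipes the file objects directly and reads `FullName` inside the loop.

```powershell
# Hypothetical folder holding the archives; replace with a real path.
$pathToFolderWithGzips = '.'

Push-Location $pathToFolderWithGzips
try {
    # Assumed pattern: matches names such as x.tar.gz.aa through x.tar.gz.an.
    Get-ChildItem -File *.tar.gz.a? | ForEach-Object {
        $archive = $_.FullName
        Write-Host "Extracting $archive"

        # x = extract, v = verbose, z = gzip, f = read the named archive file.
        # tar opens the file itself, so PowerShell never loads it into memory.
        tar xvzf "$archive"

        # tar is a native program, so its result is reported in $LASTEXITCODE.
        if ($LASTEXITCODE -ne 0) {
            Write-Warning "tar exited with code $LASTEXITCODE for $archive"
        }
        else {
            Write-Host "Finished $archive"
        }
    }
}
finally {
    # Return to the starting directory even if the loop throws.
    Pop-Location
}
```

Because the path is handed to tar as an argument, Get-Content is never involved. To extract somewhere other than the current directory, tar's `-C` option followed by an existing directory can be added to the same command line.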

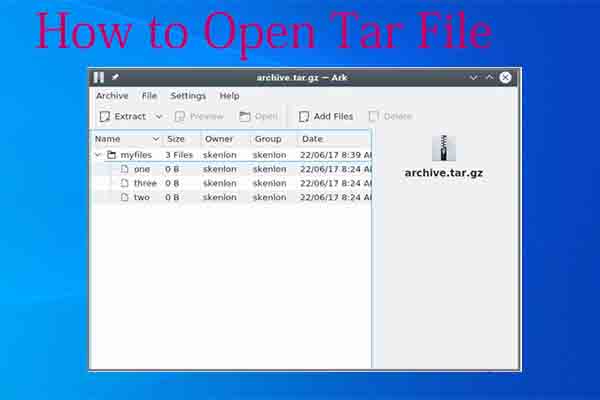

How to untar multiple files with an extension.
